It seems simple, but it is not.

How do you properly assign resource requests and limits to a Dockerized HTTP microservice running on Kubernetes?

Good question, right?

Well, as you can imagine, there is no single answer. But there is a strategy you can follow. This is not a “book definition”: there may well be other ways of sizing resource requirements, but so far I’ve been using this method successfully.

It is a delicate balance between the size of the hardware your containers will run on (i.e., the k8s nodes) and how many requests the container itself can handle.

The idea is to find that delicate balance so our pods can be scheduled on the nodes, without wasting precious resources that can be used for other workloads.

So, how do we do that?

A simple method:

Let’s take a simple microservice as an example: a Node.js Express REST API that talks to MongoDB.

We will be testing a single endpoint: GET /example

Run the container on your local machine or a k8s cluster and measure the idle resource usage:

- CPU: 1%

- Memory: 80 MB

Using a load testing tool like Apache JMeter, we fire 100 concurrent requests within 1 second and measure the resource spike using docker stats or similar:

- CPU: 140%

- Memory: 145 MB

That exceeds one CPU core, which is more than we want a single replica to need, so we reduce the number of parallel requests to 70:

- CPU: 93%

- Memory: 135 MB

Now we have something to work with. We can assume that a single instance of our app can handle up to 70 requests per second.

Setting Kubernetes resources:

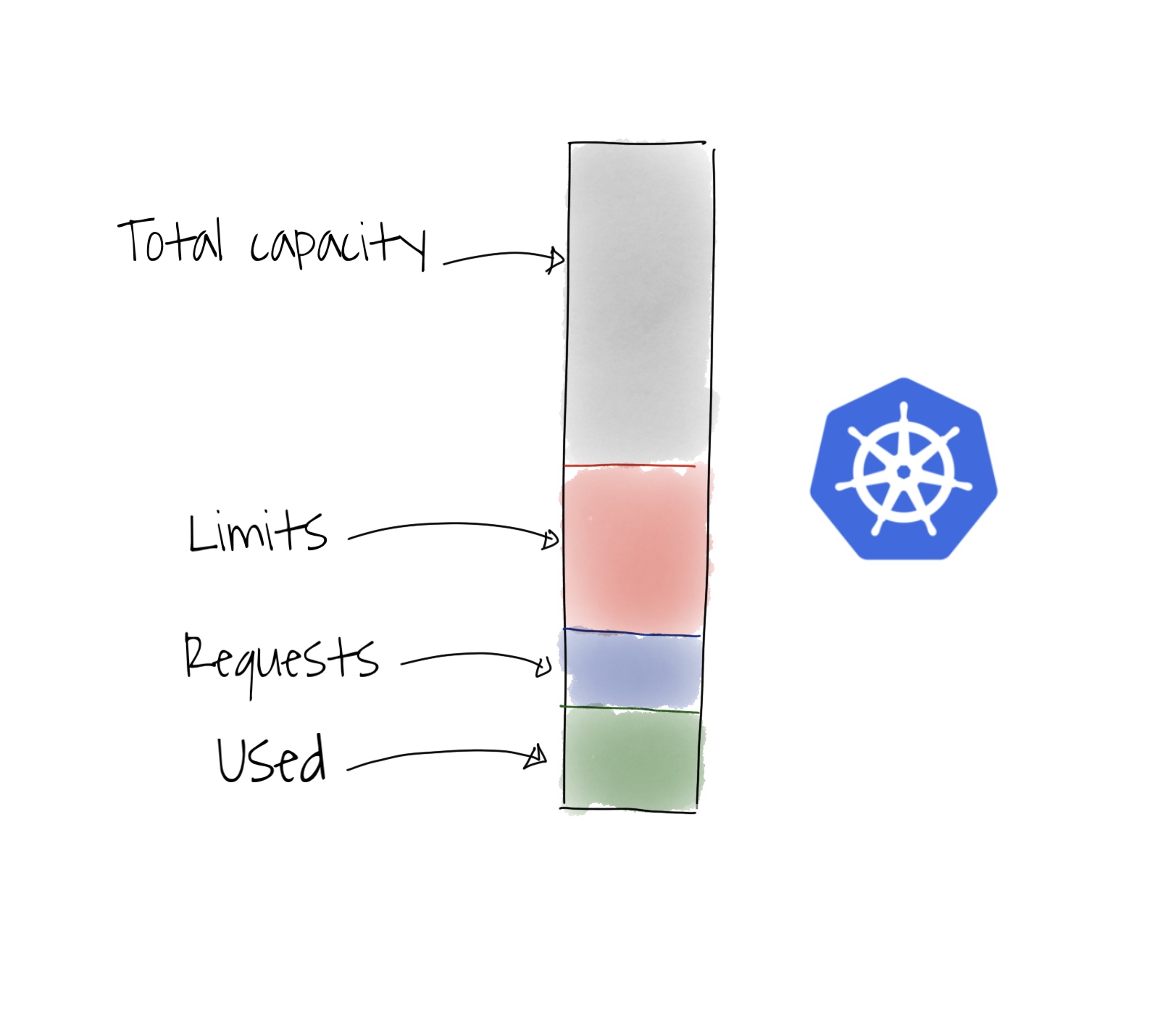

Given how Kubernetes handles resource requests and limits, it is reasonable to base our resource requests on the idle usage:

- CPU: 0.1

- Memory: 100 MB

And we can set the limits just above the peak usage we measured under load:

- CPU: 1

- Memory: 150 MB

With these values, each replica should be able to handle roughly 70 requests per second.
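The requests and limits above could be written in a pod spec roughly like this. It is only a minimal sketch: the pod name, container name and image are hypothetical placeholders, and I am assuming the memory figures map to Mi (mebibytes) and that 0.1 CPU is written as 100m.

```yaml
# Sketch only: names and image below are hypothetical placeholders.
apiVersion: v1
kind: Pod
metadata:
  name: example-api
spec:
  containers:
    - name: example-api
      image: example/api:latest   # placeholder image
      resources:
        requests:
          cpu: 100m       # 0.1 CPU, based on idle usage
          memory: 100Mi   # assumed equivalent of "100 MB"
        limits:
          cpu: "1"        # one full core, just above the 93% peak
          memory: 150Mi   # just above the 135 MB peak
```

The same resources block can be placed under the container entry of a Deployment template if you run more than one replica.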

Disclaimer: as I said before, this is a simple approach. We are not considering several things, for example node resources or MongoDB capacity.